Discriminate dominant tree species in natural secondary forests from UAV hyperspectral images using a hybrid 3D-2D convolutional neural network

LI Hao, QUAN Ying, LIU Jianyang, BIAN Shaojie, WANG Bin, LI Mingze

Journal of Nanjing Forestry University (Natural Sciences Edition) ›› 2026, Vol. 50 ›› Issue (2) : 9-18.

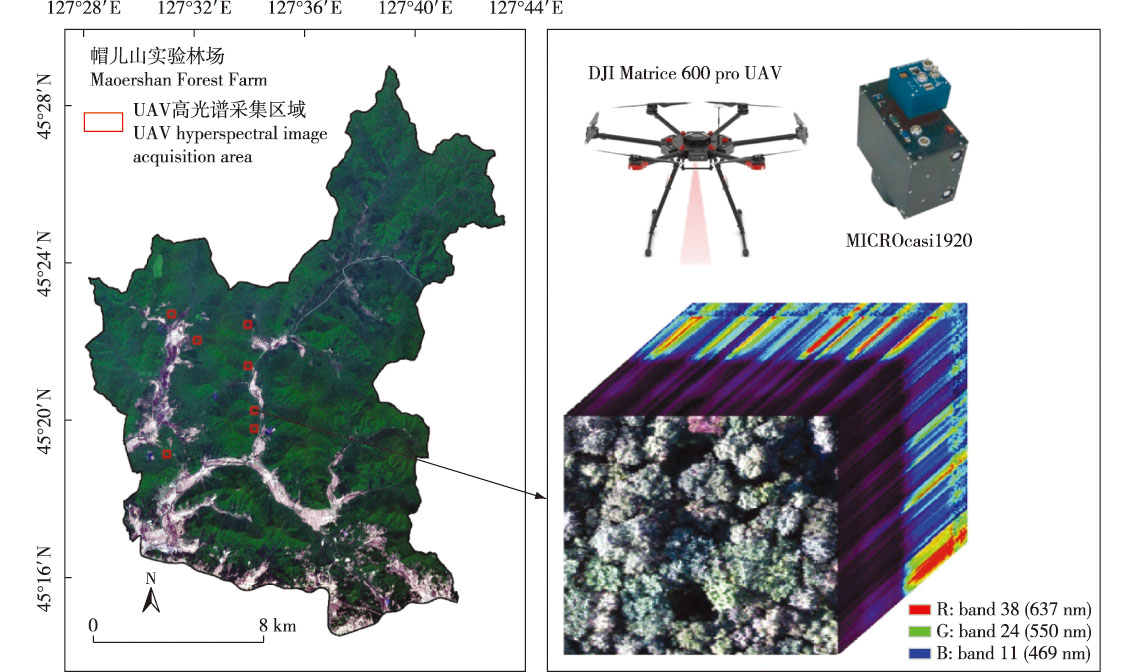

【Objective】To improve the classification accuracy of dominant tree species in typical natural secondary forests in northeast China, this study proposes a convolutional neural network (CNN)-based framework for tree species classification from UAV hyperspectral images.【Method】Four dominant tree species (Fraxinus mandshurica, Juglans mandshurica, Ulmus sp., and Betula platyphylla) at the Maoershan Experimental Forest Farm of Northeast Forestry University were studied. Hyperspectral images of seven regions were acquired with a novel UAV-mounted hyperspectral imager. A single-tree dataset covering a range of crown sizes was constructed from ground-measured data and divided into training and test sets at a 7:3 ratio. A hybrid 3D-2D-CNN model was developed in which 3D convolutional layers extract coupled spectral-spatial features and 2D convolutional layers capture detailed spatial features, strengthening the model's overall feature learning. The model was compared with 2D-CNN and 3D-CNN models and with feature-selection-based machine learning models: random forest (RF), support vector machine (SVM), and gradient boosting machine (GBM). Band importance was analyzed with a progressive band removal method, and the sensitivity of spectral features was investigated.【Result】The proposed 3D-2D-CNN model achieved a classification accuracy of 87% and an F1 score of 0.86 for the four tree species, outperforming the other algorithms with an overall accuracy improvement of 5%-6%. Band importance analysis highlighted the significant contribution of the near-infrared band to classification.【Conclusion】By effectively integrating spectral and spatial information, the 3D-2D-CNN model significantly improved tree species classification in natural secondary forests compared with traditional methods. This approach provides technical support for remote-sensing-based forest resource management and ecosystem monitoring.

natural secondary forest / tree species classification / hyperspectral image / 3D-2D-CNN model / convolutional neural network / machine learning / band importance analysis
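
The abstract does not spell out how the progressive band removal analysis works. One plausible reading is greedy backward elimination: repeatedly drop the band whose removal hurts test accuracy least, so the bands that survive longest are the most important. The sketch below illustrates that reading only. Everything in it is an assumption rather than the authors' method: the synthetic spectra, the function names, the nearest-centroid classifier standing in for the 3D-2D-CNN, and the choice to place the class signal in the last two bands (a loose analogue of near-infrared bands).

```python
import random

def make_synthetic_crowns(n_per_class=200, n_bands=8, informative=(6, 7), seed=0):
    """Synthetic per-crown mean spectra for 4 classes (NOT the paper's data)."""
    rng = random.Random(seed)
    X, y = [], []
    for label in range(4):
        for _ in range(n_per_class):
            spectrum = [rng.gauss(0.0, 1.0) for _ in range(n_bands)]
            for b in informative:
                spectrum[b] += 3.0 * label  # class signal lives only in these bands
            X.append(spectrum)
            y.append(label)
    return X, y

def split_7_3(X, y, seed=0):
    """Shuffle and split into training and test sets at a 7:3 ratio."""
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    cut = len(idx) * 7 // 10
    tr, te = idx[:cut], idx[cut:]
    return ([X[i] for i in tr], [y[i] for i in tr],
            [X[i] for i in te], [y[i] for i in te])

def centroid_accuracy(Xtr, ytr, Xte, yte, bands):
    """Test accuracy of a nearest-centroid classifier restricted to `bands`.

    This simple classifier is a placeholder for the CNN in the paper.
    """
    centroids = {}
    for label in sorted(set(ytr)):
        rows = [x for x, l in zip(Xtr, ytr) if l == label]
        centroids[label] = [sum(r[b] for r in rows) / len(rows) for b in bands]
    correct = 0
    for x, l in zip(Xte, yte):
        pred = min(
            centroids,
            key=lambda c: sum((x[b] - m) ** 2 for b, m in zip(bands, centroids[c])),
        )
        correct += pred == l
    return correct / len(yte)

def progressive_band_removal(Xtr, ytr, Xte, yte, n_bands):
    """Greedy backward elimination over bands.

    Returns (history, remaining): history lists (dropped_band, accuracy_after_drop)
    in removal order; remaining holds the single band that survived.
    """
    remaining = list(range(n_bands))
    history = []
    while len(remaining) > 1:
        scores = {}
        for b in remaining:
            kept = [r for r in remaining if r != b]
            scores[b] = centroid_accuracy(Xtr, ytr, Xte, yte, kept)
        drop = max(scores, key=scores.get)  # least harmful band to remove
        remaining.remove(drop)
        history.append((drop, scores[drop]))
    return history, remaining

X, y = make_synthetic_crowns()
Xtr, ytr, Xte, yte = split_7_3(X, y)
history, remaining = progressive_band_removal(Xtr, ytr, Xte, yte, n_bands=8)
for band, acc in history:
    print(f"dropped band {band}: accuracy with remaining bands = {acc:.3f}")
print("last surviving band:", remaining[0])
```

In this toy setting the uninformative bands are removed first and accuracy falls only once an informative band is dropped. With a real model, each candidate removal would typically require retraining or at least re-evaluating on masked input, which is far more expensive than the placeholder classifier used here.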
